There are literally thousands of ways to set up the VMs and containers. Proper resource management is critical to getting the system to perform as expected. Having spare resources available (memory, CPU cores, etc.) makes that a lot easier. You want the fewest VMs needed to get the job done, since each one requires its own allocation of finite resources. A VM also gives you a security barrier beyond what a container provides, but once again you want as few as possible.

This is fine. From an OS manageability standpoint I recommend Ubuntu for the virtual machines, and thus HA in Docker. If you want HassOS you need to use Debian for one of the VMs. Since Ubuntu is based on Debian, there will be very little difference in shell commands, update intervals, and available software.

Within each VM I recommend Webmin for browser-based management beyond the shell, and the same SSH public key inside each VM so you only need one private key to manage your network infrastructure. I have my key on a YubiKey; when I log in to a system I just need to tap it and I’m in.
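If you would rather script the key setup than paste it by hand, something like this could work. It is only a minimal sketch: the function name and paths are made up for illustration, and it assumes the key is an ordinary one-line OpenSSH public key. It appends the key to `authorized_keys` only if it is not already there, so it is safe to run more than once.

```python
import os
from pathlib import Path


def ensure_authorized_key(authorized_keys: Path, public_key: str) -> bool:
    """Append public_key to authorized_keys unless it is already present.

    Returns True if the file was changed, False if the key was already there.
    (Hypothetical helper for illustration.)
    """
    key = public_key.strip()
    # ~/.ssh should only be accessible by its owner.
    authorized_keys.parent.mkdir(mode=0o700, parents=True, exist_ok=True)

    text = authorized_keys.read_text() if authorized_keys.exists() else ""
    if key in (line.strip() for line in text.splitlines()):
        return False

    with authorized_keys.open("a") as fh:
        # Start on a fresh line if the existing file lacks a trailing newline.
        if text and not text.endswith("\n"):
            fh.write("\n")
        fh.write(key + "\n")
    # sshd may ignore the file if others can write to it.
    os.chmod(authorized_keys, 0o600)
    return True
```

The stock `ssh-copy-id` tool does the same job over the network; the sketch is only useful if you are building the VMs from a script anyway.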

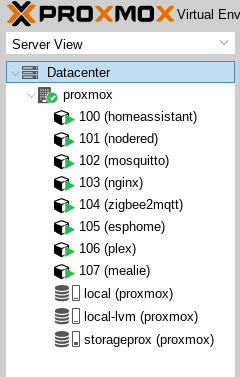

I would separate the VMs by “function”; by that I mean network services/management, home automation, other services, and so on. The purpose is better resource management at the hypervisor level, better system management at the OS level, better network management at the Docker level, and several other reasons which usually only become clear after using the system for a long time. Each VM gets its own network IP address (routed config).

For network services, this includes Mosquitto, NTP, DNS, and Samba/NFS servers. This VM would not use Docker. It would need minimal RAM and CPU resources, but high priority and availability. You could also run these on the hypervisor, but putting things that need regular updates there can force reboots that take the whole system and all VMs down.

For home automation, this includes HA, SQL, Node-RED, InfluxDB, and Grafana. Most items would run in their own Docker containers within a single network, all talking to each other inside Docker. This VM would need a lot of resources: databases like to eat RAM, and the multitude of services needs CPU.

For other services, this includes Plex, video surveillance applications, Rhasspy, Duplicati, etc. This may be a mix of native programs and Docker, and will use more resources as services are added.
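To sanity-check a split like this before building it, you can write the plan down as data and add it up against what the host actually has. This is a rough sketch only: the groupings follow the three VMs described above, but every RAM and core figure, the host size, and the amount reserved for the hypervisor are placeholder assumptions, not measurements.

```python
from dataclasses import dataclass


@dataclass
class VMPlan:
    name: str
    services: list
    ram_gb: float      # placeholder figure, not a measurement
    cores: int         # placeholder figure, not a measurement
    uses_docker: bool


# Groupings follow the text; all sizes are assumptions for illustration.
PLAN = [
    VMPlan("network-services", ["Mosquitto", "NTP", "DNS", "Samba/NFS"], 1, 1, False),
    VMPlan("home-automation", ["HA", "SQL", "Node-RED", "InfluxDB", "Grafana"], 8, 4, True),
    # The third VM is a mix of native programs and Docker.
    VMPlan("other-services", ["Plex", "video surveillance", "Rhasspy", "Duplicati"], 4, 2, True),
]


def check_allocation(plans, host_ram_gb, host_cores, hypervisor_ram_gb=2):
    """Return a list of warnings if the plan asks for more than the host has."""
    warnings = []
    ram_needed = sum(p.ram_gb for p in plans) + hypervisor_ram_gb
    cores_needed = sum(p.cores for p in plans)
    if ram_needed > host_ram_gb:
        warnings.append(f"RAM: need {ram_needed} GB, host has {host_ram_gb} GB")
    if cores_needed > host_cores:
        # Hypervisors generally allow CPU overcommit, so this is a heads-up,
        # not necessarily an error.
        warnings.append(f"CPU: {cores_needed} vCPUs planned on {host_cores} host cores")
    return warnings
```

For example, `check_allocation(PLAN, host_ram_gb=16, host_cores=8)` returns an empty list with these made-up numbers, while the same plan on an 8 GB, 4 core host produces both warnings.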

MariaDB is a fork of MySQL which has since diverged. I use MySQL, since that is what I prefer. Performance tuning an SQL server can be a challenge, but it is easier to manage and back up if you have the tools and know what you are doing.

Learn from the mistakes of others, including myself: make backup a central part of this plan. Proxmox can replicate/back up the VMs to another system. Do this on a regular schedule, and test the backup process just as often. So many people find out their backup plan is crap only when their system takes a crap, or that it was not properly configured to back up the right data.

Back up the service configuration files of Proxmox and each VM to 2 locations on a daily basis.

One should be on the system; a Samba or NFS share from the services VM is fine, as long as the files are in one easy-to-manage location. This can be a delta backup, so it only uses more space when something changes, and since it is config files only it is quite small, usually only a few MB. Use mysqldump to export the HA database and any other critical databases at the same time. The other copy can just be cloned to another system.
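As a rough idea of what the daily job could look like, here is a minimal sketch. It is a plain "copy only the files whose contents changed" pass, which is simpler than what a real incremental backup tool does, plus a helper that builds (but does not run) a `mysqldump` command. The function names, the paths, and the database name `homeassistant` are all assumptions for illustration.

```python
import hashlib
import shutil
from pathlib import Path


def _digest(path: Path) -> str:
    # Reads the whole file; fine for config-sized files.
    return hashlib.sha256(path.read_bytes()).hexdigest()


def delta_backup(src: Path, dest: Path) -> list:
    """Copy files from src to dest only if they are new or their content changed.

    Returns the relative paths that were copied.
    """
    copied = []
    for file in sorted(p for p in src.rglob("*") if p.is_file()):
        rel = file.relative_to(src)
        target = dest / rel
        if target.exists() and _digest(target) == _digest(file):
            continue  # unchanged since the last run
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(file, target)  # copy2 keeps timestamps
        copied.append(rel.as_posix())
    return copied


def mysqldump_command(database: str, out_file: str) -> list:
    """Build (but do not run) a mysqldump invocation for one database.

    Assumes credentials come from a MySQL option file such as ~/.my.cnf.
    --single-transaction gives a consistent dump of InnoDB tables.
    """
    return ["mysqldump", "--single-transaction", f"--result-file={out_file}", database]
```

You would pass the list from `mysqldump_command("homeassistant", "/some/share/ha.sql")` to `subprocess.run(..., check=True)` and then call `delta_backup` on the config directories, all from one daily cron job or systemd timer.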

Replicate the entire VMs to a physically different local system every week. This can be considered a hot spare that can be replicated back to the NUC if something goes horribly wrong with Proxmox, or the drive fails, or if the NUC needs to be replaced due to damage.

Back up that system to an external drive once a month and keep that in a fire safe.

Try to restore from the external drive to a Proxmox VM on a laptop or other system to make sure it works before locking it up.
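Booting the restored VM is the real test, but for the config files and database dumps you can also compare checksums between the live copy and the restored copy. A minimal sketch, assuming both trees are mounted somewhere you can read them; the function names are made up for illustration:

```python
import hashlib
from pathlib import Path


def tree_digests(root: Path) -> dict:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    return {
        p.relative_to(root).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in root.rglob("*")
        if p.is_file()
    }


def verify_restore(original: Path, restored: Path) -> dict:
    """Report files that are missing from, or differ in, the restored copy."""
    want, got = tree_digests(original), tree_digests(restored)
    return {
        "missing": sorted(set(want) - set(got)),
        "changed": sorted(k for k in want if k in got and want[k] != got[k]),
    }
```

An empty `missing` and `changed` list means the restored files match. Files that legitimately changed since the backup was taken will show up under `changed`, so run it soon after the backup.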

When you do the weekly replication, upload the current daily backup to an offsite cloud backup provider such as Backblaze. Duplicati does this quite well and will encrypt it beforehand. Once the backup process is configured, it is pretty much set and forget unless you add more services or VMs.
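Put together, the daily/weekly/monthly routine can be written down as a small function that tells you what is due on a given date. This is only a sketch of the schedule described above: running the weekly jobs on Sunday and the monthly job on the 1st are my assumed choices, so pick whatever days suit you.

```python
from datetime import date

WEEKLY_DAY = 6   # Sunday (date.weekday() counts Monday as 0); an assumed choice
MONTHLY_DAY = 1  # first of the month; also an assumed choice


def tasks_due(day: date) -> list:
    """Return the backup tasks that fall on the given day."""
    # The config and database backup runs every day.
    tasks = ["back up config files and database dumps to both locations"]
    if day.weekday() == WEEKLY_DAY:
        tasks.append("replicate VMs to the second local system")
        tasks.append("upload the current daily backup offsite")
    if day.day == MONTHLY_DAY:
        tasks.append("back up the second system to the external drive")
        tasks.append("test a restore from the external drive before storing it")
    return tasks
```

Calling `tasks_due(date.today())` from a daily job gives you a checklist, and it doubles as documentation of what the plan actually is.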

Make sure the NUC is connected to a large battery backup, 1500VA or larger. I would suggest having all your network infrastructure together and on the same battery unit; however, if you have substantial network hardware you need a substantial UPS. Mine weighs as much as I do and can run the entire network plus several PoE cameras and the house server for hours.
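For a back-of-the-envelope check on whether a given UPS is big enough, the arithmetic is roughly: usable watts is about the VA rating times the power factor, and runtime is about the battery's watt-hours times inverter efficiency divided by the load. The sketch below does exactly that and nothing more. The default power factor (0.6) and efficiency (0.85) are assumed placeholder values, so check the datasheet for your unit, and real runtime also depends on battery age and how the load behaves.

```python
def usable_watts(va_rating: float, power_factor: float = 0.6) -> float:
    """Approximate real-power capacity of a UPS from its VA rating.

    The default power factor is an assumption; use the datasheet value.
    """
    return va_rating * power_factor


def fits(loads_w: dict, va_rating: float, power_factor: float = 0.6) -> bool:
    """True if the summed device loads stay within the UPS's usable watts."""
    return sum(loads_w.values()) <= usable_watts(va_rating, power_factor)


def estimated_runtime_hours(battery_wh: float, load_w: float, efficiency: float = 0.85) -> float:
    """Very rough runtime estimate; the default efficiency is an assumption."""
    return battery_wh * efficiency / load_w
```

For instance, with invented loads of `{"nuc": 60, "switch": 40, "cameras": 50}` watts, `fits(..., 1500)` is true, since 150 W is well under the roughly 900 W a 1500VA unit would deliver at the assumed power factor.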

Keep a written log of the entire setup process if you can. It makes it way easier to find mistakes, and far quicker to replicate your work if needed. I had to do my server setup 3 times, each time starting from scratch, and by the third time it went from days to minutes since I could just copy/paste shell commands from the log. Looking it over was also a great learning tool.
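If you tend to forget to write things down, one option is to have the log write itself. Here is a minimal sketch of a wrapper that runs a shell command and appends it to a log file with a timestamp and exit code. The function name and log format are my own invention. The metadata goes on `#` comment lines so the commands themselves stay easy to copy/paste.

```python
import subprocess
from datetime import datetime
from pathlib import Path


def run_logged(command: str, log_file: Path) -> int:
    """Run a shell command, then append it to log_file with a timestamp.

    Returns the command's exit code. (Hypothetical helper for illustration.)
    """
    result = subprocess.run(command, shell=True)
    stamp = datetime.now().isoformat(timespec="seconds")
    with log_file.open("a") as fh:
        # Metadata on a comment line, the command itself on its own line.
        fh.write(f"# {stamp} (exit {result.returncode})\n{command}\n")
    return result.returncode
```

Failed commands are logged too, with their non-zero exit code, which is handy when you go back looking for where things went wrong.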