tl;dr: Scroll down to the “Needs” section, where I list the phrases I need and how most of them don’t work.

Background

By the end of this week, when all my speakers have finally come in the mail, I’ll be fully transitioning over to only using Home Assistant Voice Preview Edition after 10 years of Google Home and Alexa.

I currently use a combination of:

- Amazon Echo Dot for basic smart home tasks.

- Google Home Mini for music and broadcasts.

- Google Chromecast Audio for music to speakers over a 3.5mm cable.

Reasons I use both

- Alexa announcements broke this year because of added noise cancellation. Announcements get chopped up to the point where you can’t understand them, or they’re mostly silence. Google Home Mini is also louder than my 2nd Gen Echo Dots.

- My 1st gen Google Home Mini devices are super slow for smart home tasks. I mean, for the first year of ownership, they couldn’t even give me the time. On the other hand, their ability to play music is wonderful. I wouldn’t use them if not for that feature.

- I like that Alexa lets me find my phone and works 99.9% of the time no matter what I ask it. It even has a feature where you can whisper to it and it whispers back, as well as the ability to talk to another Alexa device (intercom) using the “drop-in” keyword. You can even call people’s phones while you’re still in bed!

Needs when using only Home Assistant Voice PE

Voice Assistant phrases I use just about every day:

- “What time is it?”

- “What day is it?”

- “What’s the temperature [outside]?”

- “Turn on Exhaust Fan for 10 minutes”.

- “Broadcast (or Announce) Dinner’s ready!”.

- “Drop in on Kitchen”: intercom feature.

- “Find my phone” feature (rings the phone by calling it or playing an alarm).

- “What did you do?” for recalling the last action.

It’s rarer that I ask “what sound does a badger make?” or “what’s 5 times 8042?”. While fun, those are effectively irrelevant because I can always use my phone. For the others, I want immediate feedback.

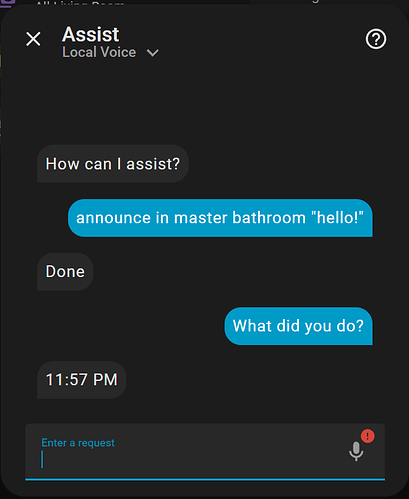

Another issue: Voice Assistant always does weird stuff like this:

What it does today

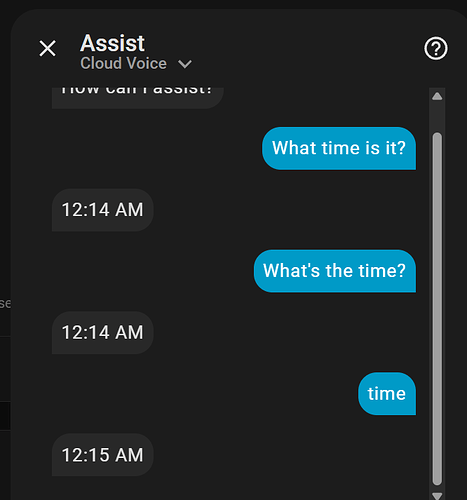

1. “What time is it?”

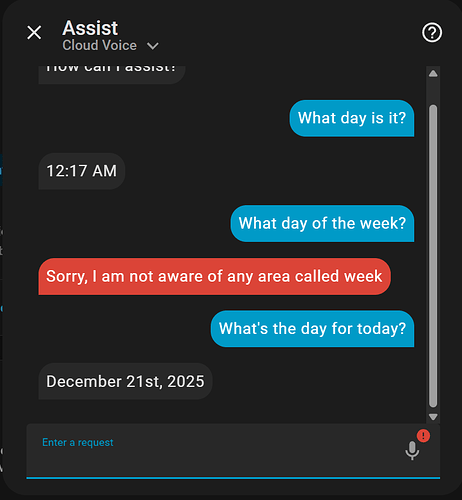

2. “What day is it?”

This question always does some wild stuff when it goes straight to the AI instead of being handled locally first.

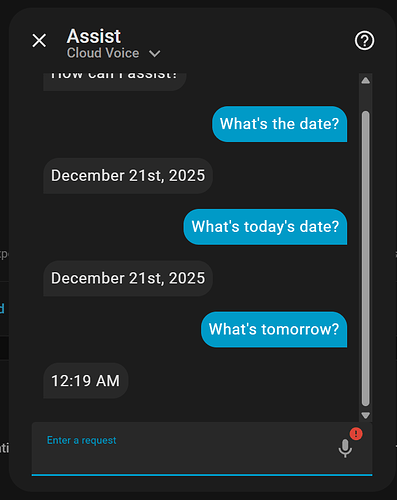

2.1. “What’s the date?”

This works, but any deviation gives weird responses again.
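Part of the problem is phrasing: “What’s the date?” gets a proper answer, but “What day is it?” and small variations fall through to the AI. Here is a minimal sketch, in plain Python, of mapping several wordings to one local answer and returning `None` for anything unmatched, so a caller could fall back to the LLM. The patterns and function names are hypothetical, and this is not how Home Assistant’s own sentence matching is implemented.

```python
import re
from datetime import datetime
from typing import Optional

# Hypothetical phrasings: several wordings map to the same kind of answer.
DATE_PATTERNS = [
    r"what day is (it|today)",
    r"what('s| is) (the )?date( today)?",
    r"what('s| is) today('s date)?",
]
TIME_PATTERNS = [
    r"what time is it",
    r"what('s| is) the time",
]


def normalize(text: str) -> str:
    """Lowercase, unify curly apostrophes, and strip trailing punctuation."""
    text = text.lower().replace("\u2019", "'")
    return re.sub(r"[?.!]+$", "", text).strip()


def answer(text: str, now: datetime) -> Optional[str]:
    """Return a local answer, or None so the caller can fall back to the LLM."""
    phrase = normalize(text)
    if any(re.fullmatch(p, phrase) for p in DATE_PATTERNS):
        # "Day" and "date" questions both get the weekday plus the full date.
        return now.strftime("It's %A, %B %d, %Y.")
    if any(re.fullmatch(p, phrase) for p in TIME_PATTERNS):
        return now.strftime("It's %H:%M.")
    return None
```

For example, “What day is it?” and “What’s the date?” produce the same sentence, while “what sound does a badger make” returns `None` and would be handed to the AI.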

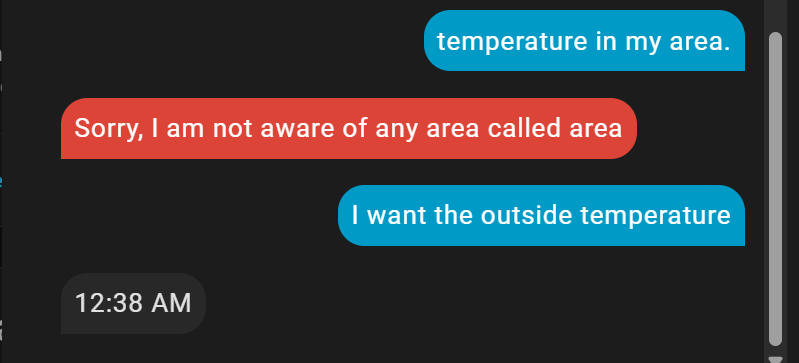

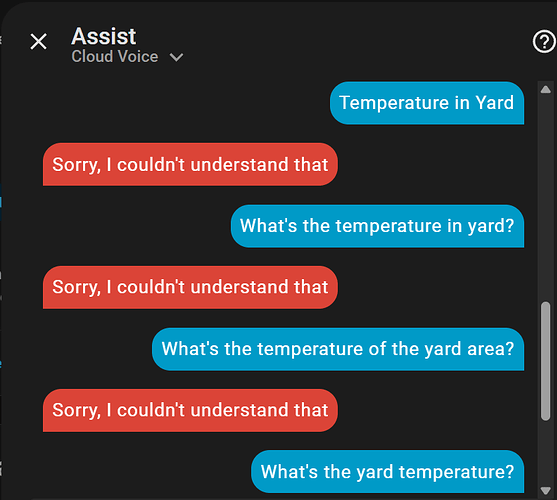

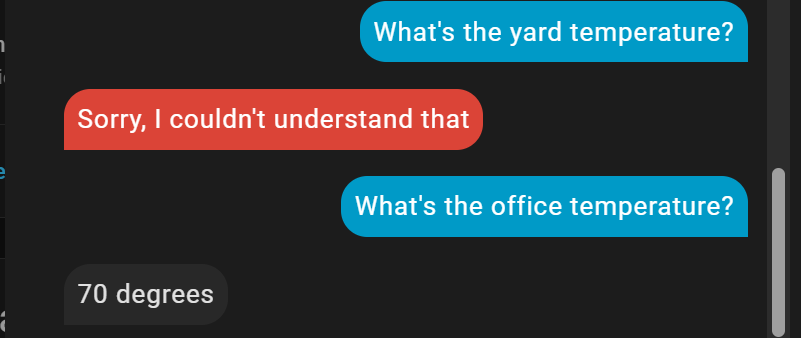

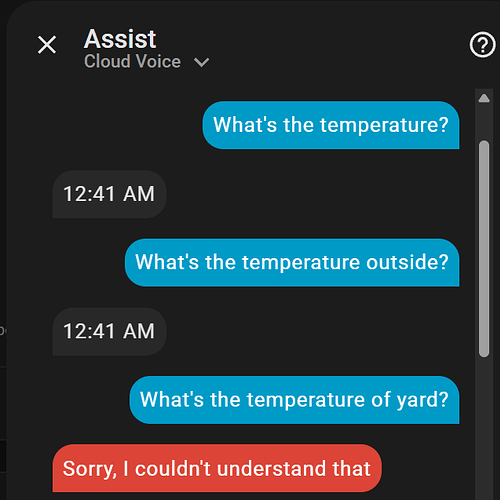

3. “What’s the temperature [outside]?”

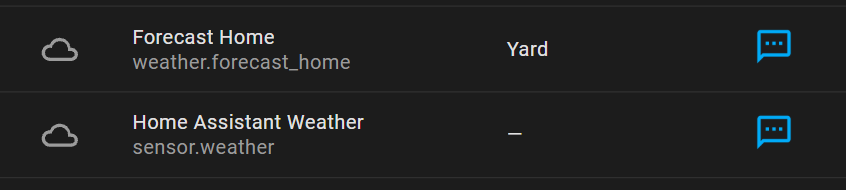

3.1. Exposing weather entities

It appears I didn’t have any weather entities exposed, so I exposed them.

And it still didn’t work:

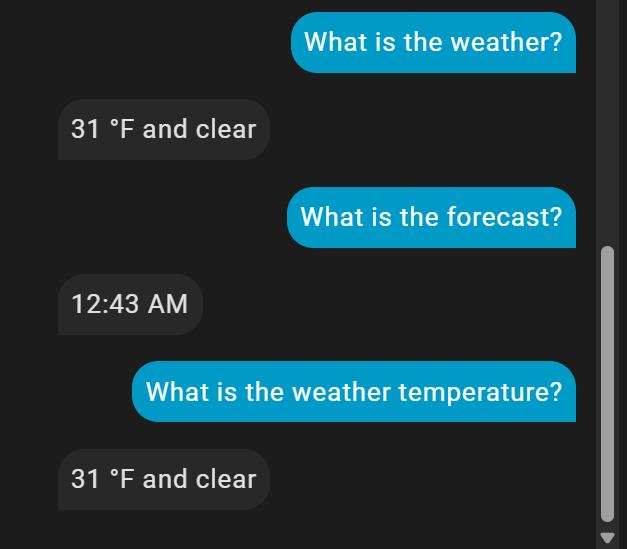

3.2. Ask for the weather

After asking for the weather directly, now I’m getting the outside temperature.
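Once a weather entity is exposed, the outside temperature lives in that entity’s `temperature` attribute rather than in its state (the state is the condition, such as “cloudy”). A minimal sketch of turning that into a spoken reply, assuming the JSON shape Home Assistant’s REST API returns for `GET /api/states/<entity_id>`; the entity ID `weather.home` and the reply wording are hypothetical.

```python
import json
from typing import Optional


def outside_temperature(state_json: str) -> Optional[str]:
    """Build a reply from a weather entity's state JSON (assumed REST API shape)."""
    state = json.loads(state_json)
    attrs = state.get("attributes", {})
    temp = attrs.get("temperature")
    if temp is None:
        return None  # no temperature available: let the caller fall back
    unit = attrs.get("temperature_unit", "")
    reading = f"{temp} {unit}".strip()
    condition = state.get("state", "unknown")
    return f"It's {reading} outside ({condition})."
```

Only the attribute lookup matters here; fetching the JSON itself needs a long-lived access token, which this sketch leaves out.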

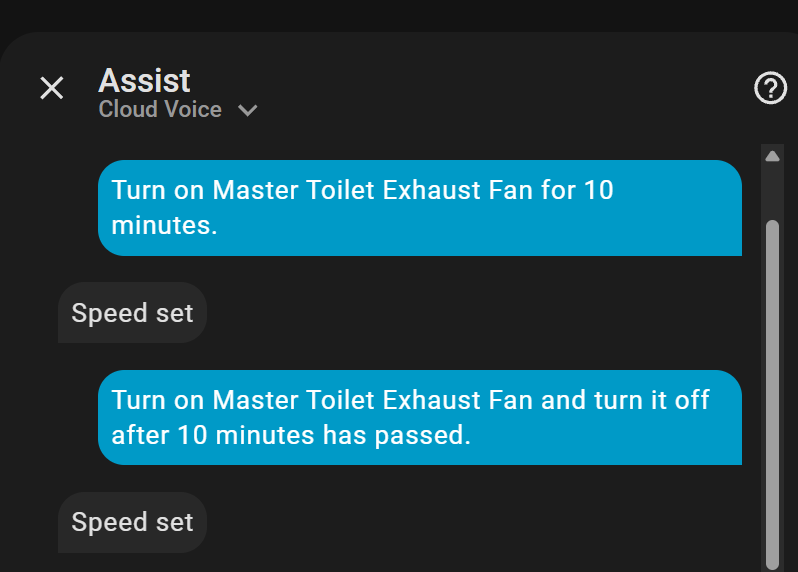

4. “Turn on Exhaust Fan for 10 minutes”.

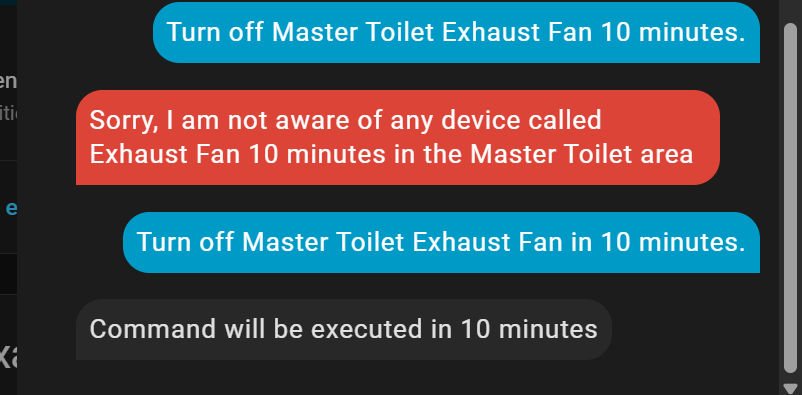

4.1. “Turn off Exhaust Fan in 10 minutes.”

Since turning it off on a timer using the “Turn On” command didn’t work, I tried something simpler:

I’ll find out in 10 minutes. Timers like these worked correctly in my testing earlier this month.

UPDATE: It worked.

It sucks to have to turn it on first and then separately ask it to turn it off in 10 minutes, rather than doing both with one 2-for-1 command.
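What I want from “Turn on Exhaust Fan for 10 minutes” is really two steps: turn on now, turn off later. A minimal sketch of splitting the phrase that way, with the on/off actions passed in as async callbacks; the regex, `parse`, and `run_for` are hypothetical names, not a Home Assistant API.

```python
import asyncio
import re
from typing import Awaitable, Callable, Optional

# Hypothetical pattern for "turn on <device> for <n> minute(s)".
PATTERN = re.compile(
    r"turn on (?P<device>.+?) for (?P<minutes>\d+) minutes?", re.IGNORECASE
)

Action = Callable[[str], Awaitable[None]]


def parse(command: str) -> Optional[tuple[str, int]]:
    """Return (device, minutes) for a timed turn-on command, else None."""
    match = PATTERN.fullmatch(command.strip().rstrip(".!"))
    if match is None:
        return None
    return match["device"], int(match["minutes"])


async def run_for(device: str, seconds: float, turn_on: Action, turn_off: Action) -> None:
    """Turn the device on now, then off after `seconds`: the 2-for-1 step."""
    await turn_on(device)
    await asyncio.sleep(seconds)
    await turn_off(device)
```

Real use would pass `minutes * 60` as `seconds`. A sleeping coroutine loses the pending turn-off if the process restarts; that is a limitation of this sketch, not something it solves.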

5. “Broadcast (or Announce) Dinner’s ready!”.

It’s late, so I’ll have to do this another day. As far as I understand, this does not work.

My earlier test tonight, trying to announce to a single room, also didn’t work.
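The voice phrase may not be wired up, but Home Assistant does have an `assist_satellite.announce` action that takes a `message` and one or more satellite entities. A minimal sketch of building that call for the REST API endpoint `POST /api/services/assist_satellite/announce`, assuming a long-lived access token; the URL, token, and entity IDs are placeholders, and the request is built but not sent.

```python
import json
import urllib.request


def announce_request(
    base_url: str, token: str, satellites: list[str], message: str
) -> urllib.request.Request:
    """Build (but don't send) a REST call to assist_satellite.announce."""
    body = json.dumps({"entity_id": satellites, "message": message}).encode()
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/api/services/assist_satellite/announce",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Sending it is `urllib.request.urlopen(req)`. Whether every satellite actually plays the announcement is what my single-room test called into question, so treat this as untested.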

6. “Drop in on Kitchen”: intercom feature.

It simply doesn’t work. Sadly, there’s no voice transfer or recording functionality.

Maybe Sendspin will make this possible in the future, but I’d like something working now.

7. “Find my phone” feature.

It can’t tell what I’m asking.

It’s also late, so I can’t verify whether I can announce to my phone. The most I know I can probably do is send a notification.
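The closest thing to “Find my phone” is probably a notification. The Home Assistant Companion App for Android documents a special `TTS` message that speaks text aloud on the phone, with a `media_stream` option that can route it through the alarm stream. A sketch of building that call through the REST API, assuming an Android phone; the notify service name `mobile_app_pixel_7`, the URL, and the token are placeholders, and this is unverified on my devices.

```python
import json
import urllib.request


def find_phone_request(
    base_url: str, token: str, notify_service: str, text: str = "Here is your phone!"
) -> urllib.request.Request:
    """Build (but don't send) a TTS notification call to a Companion App device."""
    payload = {
        "message": "TTS",  # special message the Android app speaks aloud
        "data": {"tts_text": text, "media_stream": "alarm_stream"},
    }
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/api/services/notify/{notify_service}",
        data=json.dumps(payload).encode(),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

A custom sentence could trigger a script that makes this same call, which is roughly what the Alexa feature does.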

8. “What did you do?” for recalling the last action.

It has no clue what I’m asking when I say this.

This is useful for checking what it actually did when it says “yes, I did that thing you wanted” but the thing didn’t happen. Without it, you’re left wondering what actually happened.
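Home Assistant’s logbook already records what changed and when, so one way to approximate “What did you do?” is to read back the newest entry. A minimal sketch, assuming entries shaped like those from the REST API’s `GET /api/logbook/<timestamp>`, with `when`, `name` and `message` keys; the function name and reply wording are hypothetical.

```python
from datetime import datetime
from typing import Optional


def last_action(entries: list[dict]) -> Optional[str]:
    """Describe the most recent logbook entry, or None if there are none."""
    if not entries:
        return None
    # Compare parsed timestamps rather than raw strings.
    latest = max(entries, key=lambda e: datetime.fromisoformat(e["when"]))
    when = datetime.fromisoformat(latest["when"]).strftime("%H:%M")
    return f"At {when}, {latest['name']} {latest['message']}."
```

The logbook includes every state change, not just the ones Assist triggered, so a real version would need to filter down to entries caused by the voice command.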

Conclusion

Pretty much all Voice Assistant can do for me is give me the time and the “date” (but not the “day”).

Music Playback

The other thing I needed from Voice PE is streaming music, and it does that fantastically well with Music Assistant!

The onboard speaker is complete and utter trash, useless unless it’s right by your ear, so I’ve attached every single Voice PE to a nice speaker over 3.5mm. This also replaces all my Google Chromecast Audio devices!