I’m looking for help debugging this odd issue.

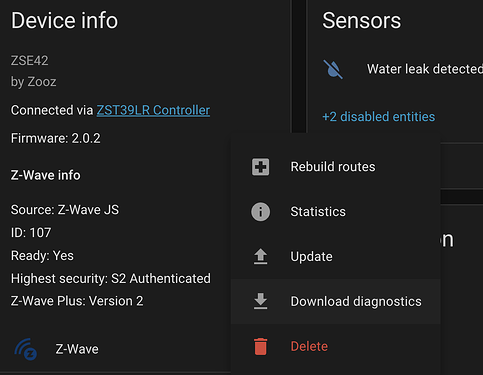

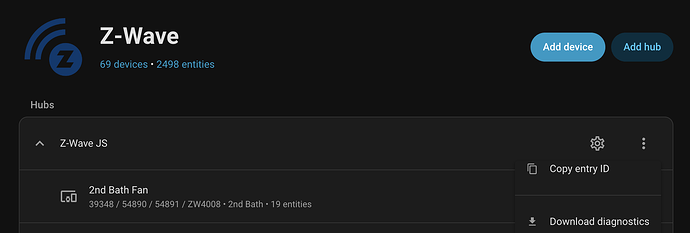

Running HAOS core 2025.12.2 and zui add-on 6.1.2 (zui 11.8.2/js 15.17.1)

(n.b. I know I can work around this with a template sensor)

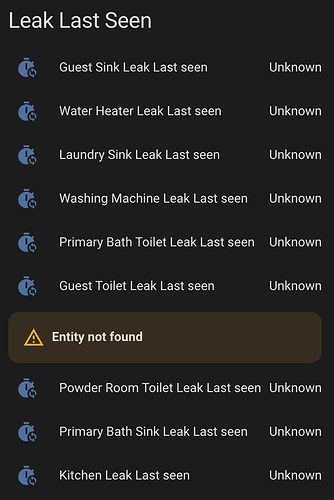

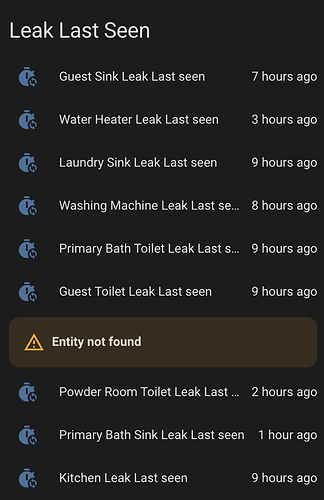

I have a bunch of Zooz ZSE42 leak sensors (battery, so mostly asleep). I have an automation that checks the last_seen times a few times a day to make sure that they are still waking up every so often (meaning they are not dead).
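For anyone unfamiliar with this kind of check, the logic amounts to roughly the following. This is a sketch in Python, not the actual HA automation: the entity names, the 24-hour threshold, and the `stale_sensors` helper are all made up for illustration. It also shows why an `unknown` last_seen matters here: the check has to treat it as a possibly dead sensor.

```python
from datetime import datetime, timedelta, timezone


def stale_sensors(last_seen, now, max_age=timedelta(hours=24)):
    """Return entity names whose last_seen is missing or older than max_age."""
    stale = []
    for name, value in last_seen.items():
        # A state of "unknown"/"unavailable" carries no timestamp at all,
        # so it cannot be distinguished from a dead sensor.
        if value in (None, "unknown", "unavailable"):
            stale.append(name)
            continue
        seen = datetime.fromisoformat(value)
        if now - seen > max_age:
            stale.append(name)
    return stale


# Hypothetical states; only "Guest Sink" corresponds to a real device in this post.
now = datetime(2025, 12, 13, 20, 0, tzinfo=timezone.utc)
states = {
    "sensor.guest_sink_last_seen": "unknown",
    "sensor.kitchen_sink_last_seen": "2025-12-13T09:15:00+00:00",
    "sensor.basement_last_seen": "2025-12-11T09:15:00+00:00",
}
print(stale_sensors(states, now))
# ['sensor.guest_sink_last_seen', 'sensor.basement_last_seen']
```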

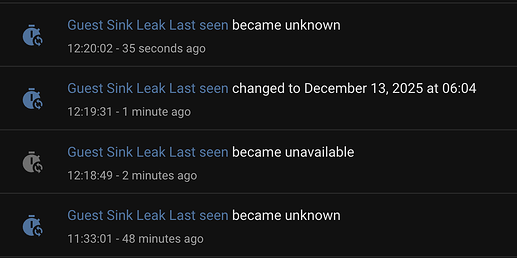

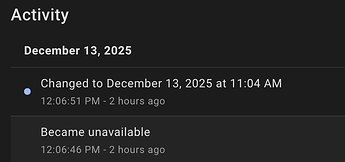

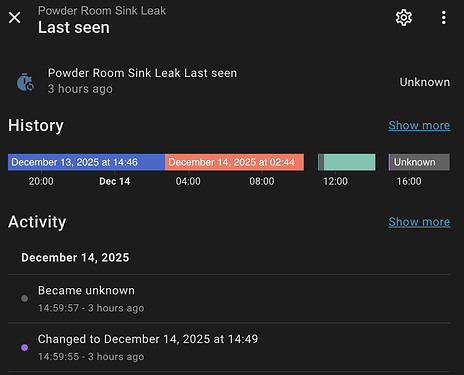

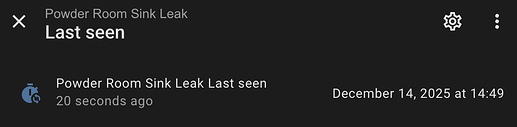

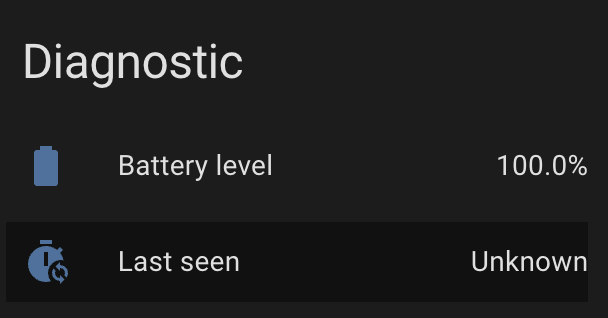

When I restart the zwave-js ui add-on (or update it) the last_seen times change to unknown. I thought that didn’t make sense – after all the last_seen time is still the same as it was before.

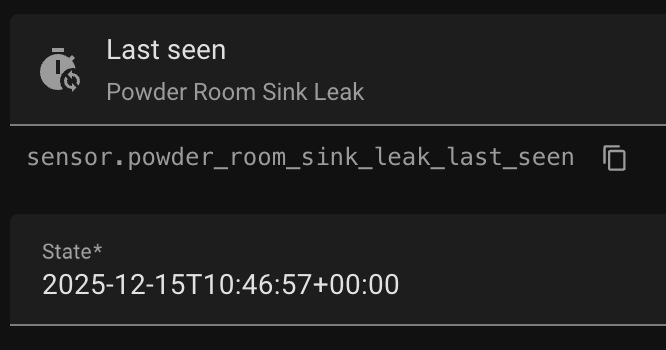

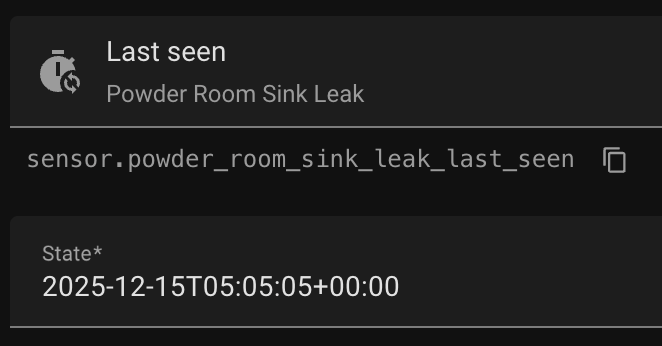

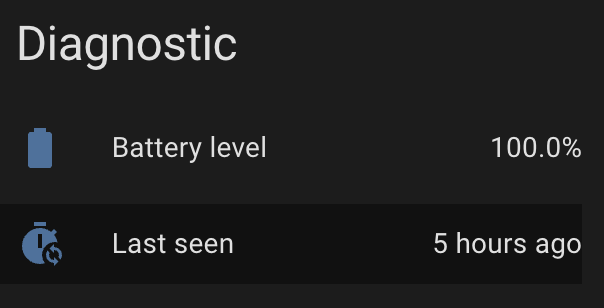

But notice that when I restart zwave-js ui, the last_seen time actually does come back, and only then changes to unknown.

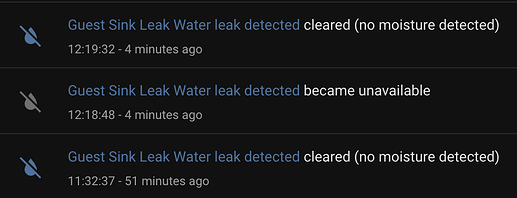

And to be clear, other states do come back:

Note: Restarting HA will bring back the old last_seen times.

Debugging:

I have too much activity on my HAOS system, so to debug this I started up a new instance of HA:stable and zwave-js ui:latest in Docker.

And now I cannot reproduce it. When I restart the zwave-js container, the last_seen times come back and do NOT then change to unknown. (Of course it works fine there…)

Now what?

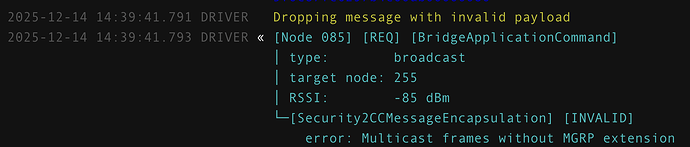

Back to HAOS production machine with zwave logging enabled.

That “Guest Sink” is node 52, so maybe the debug logs will help, but I'm not seeing any lastSeen updates for that node in the websocket messages:

$ fgrep WSMessage home-assistant_zwave_js_2025-12-13T20-20-08.405Z.log | grep lastSeen | wc -l

71

$ fgrep WSMessage home-assistant_zwave_js_2025-12-13T20-20-08.405Z.log | grep lastSeen | grep '"nodeId":52' | wc -l

0

There is a log entry for node 52 at 12:20:02 (when it changed to unknown), but it doesn’t include any lastSeen updates.

$ fgrep -B 1 '"nodeId":52' home-assistant_zwave_js_2025-12-13T20-20-08.405Z.log | grep -C 1 12:20

--

2025-12-13 12:20:02.056 DEBUG (MainThread) [zwave_js_server] Received message:

WSMessage(type=<WSMsgType.TEXT: 1>, data='{"type":"event","event":{"source":"node","event":"statistics updated","nodeId":52,"statistics":{"commandsTX":0,"commandsRX":0,"commandsDroppedRX":0,"commandsDroppedTX":0,"timeoutResponse":0,"lwr":{"repeaters":[10],"protocolDataRate":2}}}}', extra='')
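The grep pipelines above can be turned into a per-node summary with a short script. This is only a sketch under assumptions: it assumes each `WSMessage(...)` sits on one log line in the format shown above, and that `lastSeen`, when present, appears as a key inside the `statistics` object of a `statistics updated` event. The first sample line is the node 52 entry from this log; the second (node 7) is invented to show the other case. `last_seen_counts` is a hypothetical helper, not part of zwave-js or Home Assistant.

```python
import json
import re
from collections import Counter

# Pulls the JSON payload out of the logged repr of a websocket message.
WS_DATA = re.compile(r"WSMessage\(.*?data='(?P<data>.*)', extra=")


def last_seen_counts(lines):
    """Count node 'statistics updated' events with and without a lastSeen key."""
    with_ls, without_ls = Counter(), Counter()
    for line in lines:
        match = WS_DATA.search(line)
        if not match:
            continue
        try:
            msg = json.loads(match.group("data"))
        except json.JSONDecodeError:
            continue  # payloads with escaped characters are skipped in this sketch
        event = msg.get("event") if isinstance(msg, dict) else None
        if not isinstance(event, dict):
            continue
        if event.get("source") != "node" or event.get("event") != "statistics updated":
            continue
        node = event.get("nodeId")
        if "lastSeen" in event.get("statistics", {}):
            with_ls[node] += 1
        else:
            without_ls[node] += 1
    return with_ls, without_ls


sample = [
    # Real entry from the log above (node 52, no lastSeen).
    """WSMessage(type=<WSMsgType.TEXT: 1>, data='{"type":"event","event":{"source":"node","event":"statistics updated","nodeId":52,"statistics":{"commandsTX":0,"commandsRX":0,"commandsDroppedRX":0,"commandsDroppedTX":0,"timeoutResponse":0,"lwr":{"repeaters":[10],"protocolDataRate":2}}}}', extra='')""",
    # Invented entry (node 7) illustrating an update that does carry lastSeen.
    """WSMessage(type=<WSMsgType.TEXT: 1>, data='{"type":"event","event":{"source":"node","event":"statistics updated","nodeId":7,"statistics":{"commandsTX":0,"lastSeen":"2025-12-13T20:00:00.000Z"}}}', extra='')""",
]

with_ls, without_ls = last_seen_counts(sample)
print(with_ls[52], without_ls[52])  # 0 1
print(with_ls[7], without_ls[7])    # 1 0
```

To run it against the real log, pass an open file instead of `sample`, e.g. `with open(path) as f: last_seen_counts(f)`. A node that only ever shows up in `without_ls` around the time its state flips would point at a statistics update arriving without `lastSeen`.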

So, now what?

How can I debug this further? Is there a way to figure out what is making that state change to “unknown”?

Is last_seen just another entity that is set by zwave-js? That is, should HA retain the state unless told otherwise?