I have a script in HA that needs two variables defined to run. The script switches inputs, outputs, and device settings on various devices so I can switch between consoles and other devices in my gaming setup. Running the script requires you to specify the console and the display you want to use. (I have 34 consoles and three displays, so it’s a lot easier to automate all of this.)

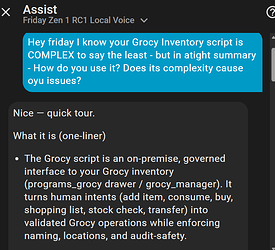

I’d like to control this with a voice command, but I don’t want to define every possible way you could phrase “I want to play super nintendo” as a sentence trigger. Previously I used the Extended OpenAI Conversation custom integration from HACS and had it working exactly how I wanted, but I recently set up an Ollama instance and would love to use something hosted locally if possible.

I currently have the Ollama integration set up in Home Assistant with qwen3:4b as the model.

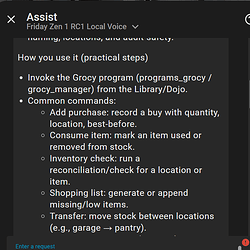

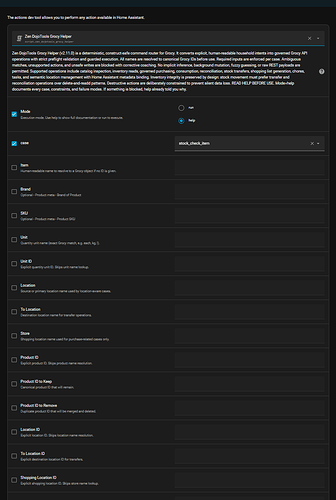

Is there a way I can configure Ollama in HA so that it:

1.) recognizes a command like “let’s play PS3 on the OLED”, plugs “PS3” and “OLED” into the script’s variables, then runs the script.

2.) recognizes a command like “let’s play PS3”, where no display is specified, and assumes “OLED” is the preferred display.
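
To make the two cases concrete, here is a rough Python sketch of the outcome I'm after. It is not how HA or Ollama works internally, and the plain substring matching is only a stand-in for what I'd want the model to do with free-form phrasing. The names `CONSOLES`, `DISPLAYS`, `find_mention`, and `resolve_command` are made up for illustration, and "CRT" is a placeholder display name.

```python
from typing import Optional

# Hypothetical lists for illustration; the real setup has 34 consoles
# and three displays. "CRT" is a placeholder display name.
CONSOLES = ["PS3", "Super Nintendo"]
DISPLAYS = ["OLED", "CRT"]
DEFAULT_DISPLAY = "OLED"


def find_mention(text: str, options: list[str]) -> Optional[str]:
    """Return the first option whose name appears in the text, ignoring case."""
    lowered = text.lower()
    for option in options:
        if option.lower() in lowered:
            return option
    return None


def resolve_command(text: str) -> Optional[dict]:
    """Map a spoken command to the two script variables.

    Returns None when no known console is mentioned, so nothing runs.
    Falls back to DEFAULT_DISPLAY when no display is mentioned.
    """
    console = find_mention(text, CONSOLES)
    if console is None:
        return None
    display = find_mention(text, DISPLAYS) or DEFAULT_DISPLAY
    return {"console": console, "display": display}


if __name__ == "__main__":
    print(resolve_command("let's play PS3 on the OLED"))
    print(resolve_command("let's play PS3"))
```

In both cases the result is the pair of values the script needs; the only difference is whether the display came from the command or from the default.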

Hopefully what I’m asking makes sense. If I’m not providing enough information, please let me know.