Voice Assistant Long-term Memory

Imagine asking Home Assistant:

“Where’s my car parked?”

“What’s my Wi-Fi password?”

“Remind me where I put the spare keys.”

With Memory Tool, your Voice Assist can now remember and recall information long-term - just like a personal assistant that never forgets.

Two editions are available depending on your needs:

Local Only - Private, offline, lightning fast.

LLM Integrated - Natural, conversational, powered by GPT/Gemini.

My GitHub repository for all dependency files

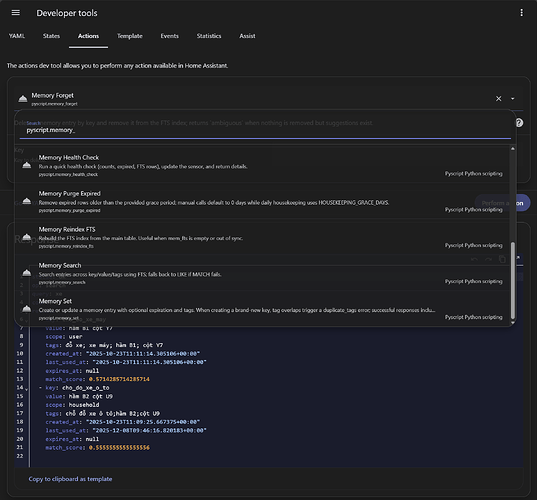

Edition 1: Local Only

This version runs entirely inside Home Assistant; no LLM or internet connection is required.

Features (Local Only)

- Works offline - fast, private, and secure.
- SQLite with FTS5 full-text search for quick lookups.
- Supports set, get, search, and forget, plus TTL, tags, and scopes.
- Handles duplicates by updating existing memories or creating new ones.
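To make the storage idea concrete, here is a minimal sketch of a key/value table paired with an FTS5 index, using Python's built-in `sqlite3` module. The table names, column names, and function names are hypothetical and chosen for illustration; the actual `memory.py` may be organized quite differently. It also assumes the SQLite library bundled with your Python build has FTS5 enabled.

```python
import sqlite3

# Hypothetical schema for illustration only; the real memory.py may differ.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE mem (key TEXT PRIMARY KEY, value TEXT NOT NULL, tags TEXT DEFAULT '')"
)
conn.execute("CREATE VIRTUAL TABLE mem_fts USING fts5(key, value, tags)")


def memory_set(key, value, tags=""):
    """Create a memory, or overwrite it when the key already exists."""
    conn.execute(
        "INSERT OR REPLACE INTO mem(key, value, tags) VALUES (?, ?, ?)",
        (key, value, tags),
    )
    # Keep the full-text index in step with the main table.
    conn.execute("DELETE FROM mem_fts WHERE key = ?", (key,))
    conn.execute(
        "INSERT INTO mem_fts(key, value, tags) VALUES (?, ?, ?)",
        (key, value, tags),
    )


def memory_get(key):
    """Return the stored value for an exact key, or None."""
    row = conn.execute("SELECT value FROM mem WHERE key = ?", (key,)).fetchone()
    return row[0] if row else None


def memory_search(query, limit=5):
    """Return (key, value) pairs ranked by FTS5 relevance."""
    # Quote each word so user input is treated as plain terms, not FTS5 syntax.
    terms = " ".join('"' + w.replace('"', '""') + '"' for w in query.split())
    if not terms:
        return []
    rows = conn.execute(
        "SELECT key, value FROM mem_fts WHERE mem_fts MATCH ? ORDER BY rank LIMIT ?",
        (terms, limit),
    )
    return rows.fetchall()


def memory_forget(key):
    """Delete a memory from both the main table and the index."""
    conn.execute("DELETE FROM mem WHERE key = ?", (key,))
    conn.execute("DELETE FROM mem_fts WHERE key = ?", (key,))
```

Calling `memory_set` twice with the same key overwrites the earlier value, which is one simple way to get the "update existing memories" behavior described above. TTL and scopes are left out here to keep the sketch short.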

Installation (Local Only)

- Install and configure the Pyscript integration through HACS.
- Copy `scripts/memory.py` and `scripts/common_utilities.py` into the `config/pyscript` folder.
- Import the `memory_tool_local.yaml` blueprint and create an automation from it.
- Dependency files are included in the repository.
- Restart Home Assistant - the tool will automatically create its SQLite database on first run.

Edition 2: LLM Integrated

This version connects to an LLM (e.g., GPT or Gemini) to manage memory automatically during conversations.

Features (LLM Integrated)

- Interact naturally and conversationally, in any language you choose.
- The LLM decides when to run set, get, search, or forget.
- Can refine queries, update existing keys, or create new entries dynamically.
- Uses the same SQLite + FTS5 backend, with extra flexibility thanks to LLM reasoning.
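As a rough illustration of "the LLM decides which operation to run", the sketch below routes a tool call to one of the four operations. The dictionary fields (`operation`, `key`, `value`, `query`), the status strings, and the in-memory `store` are all assumptions made for this example; the real blueprint defines its own fields and talks to the SQLite backend instead.

```python
# Hypothetical in-memory store standing in for the SQLite backend.
store = {}


def handle_tool_call(call):
    """Route one LLM tool call (a plain dict) to a memory operation."""
    op = call.get("operation")
    key = call.get("key", "")

    if op == "set":
        existed = key in store
        store[key] = call.get("value", "")
        return {"status": "updated" if existed else "created", "key": key}

    if op == "get":
        if key in store:
            return {"status": "ok", "key": key, "value": store[key]}
        return {"status": "not_found", "key": key}

    if op == "search":
        # Naive substring match; the real tool uses FTS5 instead.
        words = call.get("query", "").lower().split()
        hits = [
            k
            for k, v in store.items()
            if any(w in (k + " " + v).lower() for w in words)
        ]
        return {"status": "ok", "matches": hits}

    if op == "forget":
        removed = store.pop(key, None) is not None
        return {"status": "deleted" if removed else "not_found", "key": key}

    return {"status": "error", "message": f"unknown operation: {op!r}"}
```

Returning a small status dictionary gives the LLM something to phrase its reply from, for example confirming an update versus a newly created entry.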

Installation (LLM Integrated)

- Install and configure the Pyscript integration through HACS.
- Copy `scripts/memory.py` into the `config/pyscript` folder.
- Import the `memory_tool_full_llm.yaml` blueprint and create a script (do not change the default script name).
- Requires specific usage rules and system policies - see `home_assistant_voice_instructions.md` for details.
- Dependency files are included in the repository.
- Restart Home Assistant - the tool will automatically create its SQLite database on first run.

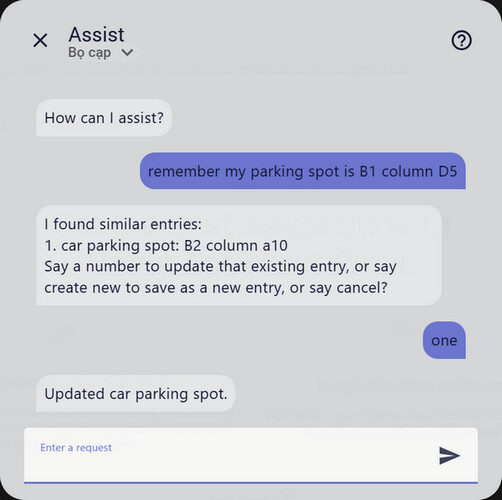

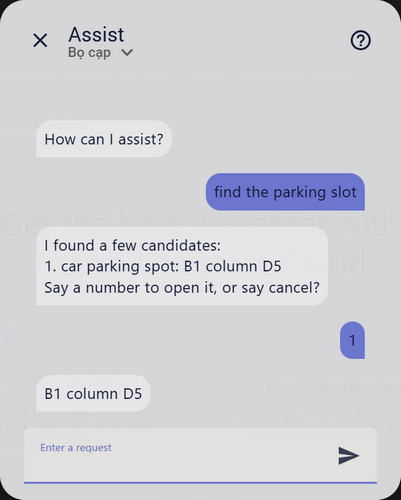

Example: Local vs LLM

Scenario: Saving and retrieving your parking spot

Local Only Edition (exact commands, keyword-based)

You: “Remember office parking spot is in basement 3 near the elevator entrance”

You: “Find office parking spot”

Assist: “in basement 3 near the elevator entrance”

You: “Find parking spot”

Assist: “I found a few candidates: 1. office_parking_spot: in basement… Say a number to open it, or say cancel?”

You: “One”

Assist: “in basement 3 near the elevator entrance”
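The "say a number" step in the Local Only exchange could be handled along these lines. This is only a sketch: the function names, the preview length, and the small number-word table are hypothetical, and the actual blueprint may resolve the follow-up differently.

```python
# Hypothetical helpers for the numbered follow-up; not taken from the blueprint.
NUMBER_WORDS = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5}


def format_candidates(candidates, preview_len=12):
    """Build the spoken prompt from a list of (key, value) pairs."""
    parts = []
    for i, (key, value) in enumerate(candidates, start=1):
        if len(value) <= preview_len:
            preview = value
        else:
            preview = value[:preview_len].rstrip() + "…"
        parts.append(f"{i}. {key}: {preview}")
    return (
        "I found a few candidates: "
        + " ".join(parts)
        + " Say a number to open it, or say cancel."
    )


def resolve_choice(reply, candidates):
    """Map a reply such as 'One' or '2' to the chosen value, or None."""
    word = reply.strip().lower()
    if word == "cancel":
        return None
    index = NUMBER_WORDS.get(word)
    if index is None and word.isdigit():
        index = int(word)
    if index is None or not 1 <= index <= len(candidates):
        return None
    return candidates[index - 1][1]
```

With a single candidate `("office_parking_spot", "in basement 3 near the elevator entrance")`, a reply of "One" resolves to the full stored value, and "cancel" or an out-of-range number resolves to nothing.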

LLM Integrated Edition (natural language, context-aware)

You: “Remember office parking spot is in basement 3 near the elevator entrance”

You: “Hey, I left my car somewhere… where did I park again?”

Assist: “You told me your car is parked in basement 3 near the elevator entrance”

You: “Actually I moved it to basement 2, column 6”

Assist: “Got it, I’ve updated your parking spot to basement 2, column 6”

Local = precise, offline, reliable.

LLM = flexible, conversational, smarter.

Real-World Use Cases

- Store and recall Wi-Fi passwords, parking locations, reminders, phone numbers, or any details you want.
- Save temporary or long-term notes, with TTL configurable up to 10 years - or forever.
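One way to think about the TTL setting is as an expiry timestamp computed when a memory is saved. The sketch below assumes TTL is given in days, that the 10-year cap is 3650 days, and that a missing or non-positive TTL means "forever"; these conventions and the function names are illustrative and may not match what the tool actually does.

```python
from datetime import datetime, timedelta, timezone

MAX_TTL_DAYS = 3650  # assumption: "10 years" treated as 3650 days


def expires_at(ttl_days, now=None):
    """Return the expiry time for a memory, or None when it never expires."""
    if ttl_days is None or ttl_days <= 0:
        return None  # assumption: no TTL means keep forever
    now = now or datetime.now(timezone.utc)
    return now + timedelta(days=min(ttl_days, MAX_TTL_DAYS))


def is_expired(expiry, now=None):
    """True once the expiry time has passed; never true for 'forever'."""
    if expiry is None:
        return False
    now = now or datetime.now(timezone.utc)
    return now >= expiry
```

A temporary note such as a guest Wi-Fi password might get a TTL of a few days, while a spare-key location would be saved with no TTL at all.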

Which Edition Should You Choose?

- Local Only - Best for privacy, speed, and offline reliability.
- LLM Integrated - Best for natural conversations and smarter handling of memory.

Both editions will continue to be developed in parallel. I’d love feedback from anyone who tries them - especially ideas for new use cases or improvements!