I give up and undoubtedly can’t see the forest for the trees. I turned off the port forwarding, and left configuration.yaml set to:

    http:
      base_url: https://solarczarhomeassistant.duckdns.org
      server_port: 443
      ssl_certificate: /ssl/fullchain.pem
      ssl_key: /ssl/privkey.pem

Chrome and Safari both give ERR_SSL_PROTOCOL_ERROR.

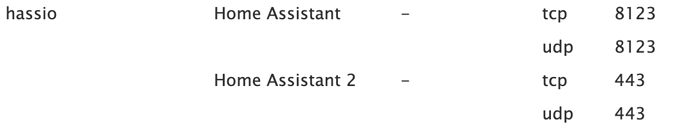

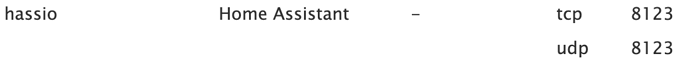

If I remove server_port: 443 from the yaml file, I get the same error. I’ve tried 2 port forwarding rules to make sure I’m understanding the verbiage of the host vs. forwarding port.

Neither has worked. I went so far as to turn on both…
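For reference, this is roughly what the http section looks like with server_port removed. As far as I understand it, Home Assistant then falls back to its default port, 8123, so the router rule would presumably have to forward external 443 to internal 8123 (that mapping is my assumption, not something I have confirmed):

```yaml
# configuration.yaml, variant without an explicit server_port.
# With no server_port set, Home Assistant listens on its default port (8123).
http:
  base_url: https://solarczarhomeassistant.duckdns.org
  ssl_certificate: /ssl/fullchain.pem
  ssl_key: /ssl/privkey.pem
```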

I can reach HASS at https://solarczarhomeassistant.duckdns.org:8123, but not without the :8123 port. I’ve turned off AdGuard, as I don’t think I understand the “rewrite” specifics. I’ve turned on NGINX, with no joy. I know this should be simple, but I’m struggling.