Can you elaborate? Is this a real integration? I think it would be a useful service call for those integrations that do not retry automatically.

The guide for getting the secret key does not work. I think it needs to be updated, perhaps with a guide on how to sniff it out.

Sure, the GitHub repo is here: Retry

I’m currently using it like this:

    action: retry.actions
    metadata: {}
    data:
      retries: 5
      backoff: "[[ 15 * 2 ** attempt ]]"
      validation: >-
        [[ state_attr(entity_id, 'temperature') == temperature and
        is_state(entity_id, hvac_mode) ]]
      state_delay: 5
      repair: true
      retry_id: "climate.wiser_livingroom_hvac_temperature"
      sequence:
        - action: climate.set_temperature
          target:
            entity_id: climate.wiser_livingroom
          data:
            temperature: 18
            hvac_mode: "heat"

Edited to remove my templates
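
To make the backoff expression in the example above concrete, here is a minimal Python sketch of the delays that `15 * 2 ** attempt` produces. It assumes `attempt` is a zero-based counter and that one delay is computed per attempt; `backoff_delays` is a hypothetical helper for illustration, not part of the Retry integration.

```python
def backoff_delays(base_seconds: int, attempts: int) -> list[int]:
    """Return the exponential backoff delay (in seconds) for each attempt index."""
    # Assumption: the attempt counter starts at 0, so the first delay equals the base.
    return [base_seconds * 2 ** attempt for attempt in range(attempts)]


if __name__ == "__main__":
    # Delays for a base of 15 seconds over 5 attempts.
    print(backoff_delays(15, 5))
```

Under those assumptions the delays double each time, so a failing command is spaced out over several minutes rather than hammered repeatedly.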

Thanks for the info.

It retries actions; it does not reload integrations.

However it looks really interesting and I can think of several use cases for it when I try to reload/reboot devices and sensors.

Your example is informative - cheers

For missed commands, it’s probably the hub having disconnected from WiFi. I solved it with a simple wait template I use as the very first action in automations:

    actions:
      # Check Wiser Hub is not offline and wait for it to come back online if it is
      - wait_template: "{{ is_state('binary_sensor.wiser_hub_status', 'off') and is_state('binary_sensor.wiser_hub', 'on') }}"

Create a Ping binary sensor AND a template binary sensor as follows:

    # Wiser Hub Status binary sensor
    - name: Wiser Hub Status
      unique_id: C091D37C-4A26-48C2-BDA6-94F7B8B5568B
      device_class: problem
      icon: >-
        {%- if is_state('binary_sensor.wiser_hub_status', 'on') -%}
          mdi:router-wireless-off
        {%- else -%}
          mdi:router-wireless
        {%- endif -%}
      state: >-
        {%- if is_state_attr('sensor.wiser_hub_heating_operation_mode', 'last_update_status', 'Success') -%}
          off
        {%- else -%}
          on
        {%- endif -%}

Years ago, when the disconnection problems were really frequent, I had automations that acted up until I did this.

If you need to reload an integration use:

    - action: homeassistant.reload_config_entry
      data:
        entry_id: 885ed8abb7ebf3e4cea8627a61061659

The entry_id is found by searching for the integration name in the file:

\config\.storage\core.config_entries

e.g. I am restarting my Airthings BLE integration when its entities are unavailable for a while.

    {
      "domain": "airthings_ble",
      "entry_id": "885ed8abb7ebf3e4cea8627a61061659"
    }
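
Searching the file by hand works, but the lookup can also be scripted. The following is a minimal Python sketch that assumes the file is JSON with the entries listed under `data` → `entries` (an assumption about the storage layout, which may differ between Home Assistant versions). `find_entry_ids` is a hypothetical helper, and the second sample entry is invented for illustration.

```python
import json
from typing import Any, Dict, List


def find_entry_ids(config_entries: Dict[str, Any], domain: str) -> List[str]:
    """Return the entry_id of every config entry belonging to the given domain."""
    # Assumption: entries live under data -> entries in core.config_entries.
    entries = config_entries.get("data", {}).get("entries", [])
    return [entry["entry_id"] for entry in entries if entry.get("domain") == domain]


# In-memory stand-in for the parsed file; the "example" entry is hypothetical.
SAMPLE = json.loads("""
{
  "data": {
    "entries": [
      {"domain": "airthings_ble", "entry_id": "885ed8abb7ebf3e4cea8627a61061659"},
      {"domain": "example", "entry_id": "00000000000000000000000000000000"}
    ]
  }
}
""")

if __name__ == "__main__":
    print(find_entry_ids(SAMPLE, "airthings_ble"))
```

To use it against a real installation, load the file with `json.load` instead of the sample and pass the integration's domain.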

Thanks Robert,

I already know that and use it. But retrying an action is something new to me and I think I would find it useful.

Again, thank you for reminding me - cheers Mike

Personally I don’t use the Retry HACS integration. I installed it years ago thinking I might find a use for it, but I’ve always just created a loop in my automation to check for states, where I need to retry something multiple times.

However, I can see how the Retry integration would be useful for some people.

Both methods are probably equally good options. I just favour the native option personally.

Yeah, it won’t reload the integration automatically, but there is an on_error key in the config. I prefer manual intervention, which is why I didn’t use it in my example, but you could have it fire an action to reload the Wiser integration once all retries have failed.

Relevant part of the README.

on_error parameter (optional)

A sequence of actions to perform if all retries fail.

Here is an automation rule example with a self remediation logic:

    alias: Kitchen Evening Lights
    mode: parallel
    trigger:
      - platform: sun
        event: sunset
    action:
      - action: retry.action
        data:
          action: light.turn_on
          entity_id: light.kitchen_light
          retries: 2
          on_error:
            - action: homeassistant.reload_config_entry
              data:
                entry_id: "{{ config_entry_id(entity_id) }}"
            - delay:
                seconds: 20
            - action: automation.trigger
              target:
                entity_id: automation.kitchen_evening_lights
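
The control flow of that example (try a few times, then run a remediation step only when every attempt has failed) can be sketched in plain Python. This is an illustration of the pattern, not the Retry integration's code: `retry_action` is a hypothetical helper, `retries` is treated as the total number of attempts (an assumption), and the delay between attempts is left out for brevity.

```python
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def retry_action(
    action: Callable[[], T],
    retries: int,
    on_error: Optional[Callable[[], None]] = None,
) -> T:
    """Call action up to `retries` times; run on_error once if every attempt fails."""
    if retries < 1:
        raise ValueError("retries must be at least 1")
    last_error: Optional[Exception] = None
    for _ in range(retries):
        try:
            return action()
        except Exception as err:  # any failure counts as a failed attempt
            last_error = err
    # All attempts failed: run the remediation hook, then surface the last error.
    if on_error is not None:
        on_error()
    assert last_error is not None
    raise last_error
```

In the automation above, the remediation hook corresponds to reloading the config entry, waiting 20 seconds and re-triggering the automation.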

You’re most welcome as well.

I was using loops, the same as Robert, but ended up preferring this solution. Both solutions have the same outcome. I like the repair notices, as they give me some indication if something is starting to fail really badly.

The wait solution is very clean though; I might have to try that myself.

Hi there,

I’ve been going through my Watchman log for issues with entities. The following two entities related to Drayton Wiser are coming up as unknown:

button.wiser_boost_hot_water

button.wiser_cancel_hot_water_overrides

Both entities are found - are they just showing as unknown because they don’t have any data against them (e.g. not used since the entities were created)?

Thanks!

Thanks for sharing.

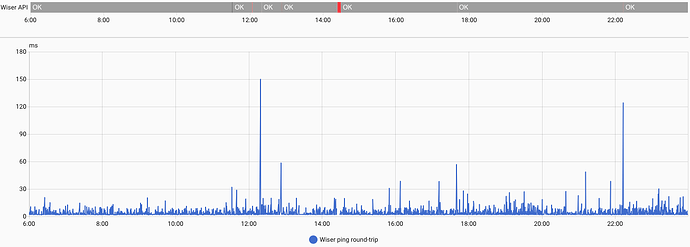

Inspired by your approach, I set up a hub ping sensor (1 ping every 10s) and discovered it spikes occasionally from <10ms to >100ms. I’m guessing the spikes are when the hub CPU is struggling.

With this insight, I augmented your health check logic to include ping spikes (>50ms), in a GUI template sensor (name: Wiser API, class: Problem):

    {% set ping_ms = states('sensor.wiser_lan_round_trip_time_average') %}
    {% set ping_problem = ping_ms in ['unknown', 'unavailable', 'none']
       or ping_ms | int(999) > 50 %}
    {% set poll_failure = not is_state_attr(
         'sensor.wiser_heating_operation_mode',
         'last_update_status',
         'Success'
       ) %}
    {{ ping_problem or poll_failure }}
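
For anyone who wants to reason about the template's behaviour outside Home Assistant, here is a rough Python equivalent of the same logic. It is a sketch under assumptions: `to_int` only approximates the template `int(999)` filter, and both function names are hypothetical.

```python
def to_int(value: str, default: int) -> int:
    """Approximate the template int filter: fall back to default on bad input."""
    try:
        return int(float(value))
    except (TypeError, ValueError, OverflowError):
        return default


def wiser_api_problem(
    ping_ms: str, last_update_status: str, spike_threshold_ms: int = 50
) -> bool:
    """Return True when the hub looks unhealthy (ping missing/slow or poll failed)."""
    # A missing ping reading or a round trip above the threshold counts as a problem.
    ping_problem = (
        ping_ms in ("unknown", "unavailable", "none")
        or to_int(ping_ms, 999) > spike_threshold_ms
    )
    # Any poll status other than "Success" counts as a failure.
    poll_failure = last_update_status != "Success"
    return ping_problem or poll_failure
```

Note that an unparseable ping value falls back to 999, which is above the threshold, so bad data is treated as a problem rather than silently ignored.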

With hindsight, my prior Wiser automation fragility was likely due to sending commands while the CPU was overloaded and not waiting for a successful hub poll before evaluating the results.

So I created two guard scripts to wrap around Wiser commands in retry loops: a pre-flight Wiser Wait for Healthy API check (similar to yours), and a post-flight Wiser Wait for Next Successful Poll check.

Here are the scripts if folk are interested.

    alias: Wiser Wait for Healthy API
    description: >-
      Blocking guard script that waits for the Wiser hub to be reachable and healthy.
      Wait for a fresh ping if over half way through the 10s ping cycle.
    mode: queued
    max: 50
    sequence:
      - wait_template: >
          {{ as_timestamp(now()) -
             as_timestamp(states.sensor.wiser_lan_round_trip_time_average.last_updated)
             <= 5 }}
      - wait_template: "{{ is_state('binary_sensor.wiser_api', 'off') }}"

    alias: Wiser Wait for Next Successful Poll
    mode: parallel
    max: 50
    sequence:
      - variables:
          last_poll: "{{ states.sensor.wiser_heating_operation_mode.last_updated }}"
      - wait_template: |
          {{ states.sensor.wiser_heating_operation_mode.last_updated != last_poll }}
      - wait_template: |
          {{ is_state_attr(
               'sensor.wiser_heating_operation_mode',
               'last_update_status',
               'Success'
             ) }}
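
The post-flight idea (remember the last poll time, wait for it to change, then require a successful status) can also be sketched in Python for use outside Home Assistant. This is an illustration only: `read_poll` is a hypothetical callable returning `(last_updated, last_update_status)`, and the timeout is an addition that the script above does not have.

```python
import time
from typing import Callable, Tuple


def wait_for_next_successful_poll(
    read_poll: Callable[[], Tuple[str, str]],
    timeout: float = 120.0,
    interval: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Block until a poll newer than the current one reports "Success"."""
    baseline, _ = read_poll()  # timestamp of the poll that predates our command
    deadline = clock() + timeout
    while clock() < deadline:
        last_updated, status = read_poll()
        # Only accept a poll that happened after the baseline and succeeded.
        if last_updated != baseline and status == "Success":
            return True
        sleep(interval)
    return False  # gave up: no fresh successful poll within the timeout
```

Passing `sleep` and `clock` as parameters is a design choice that keeps the function testable without real waiting.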

Thanks again for inspiring me (and to ChatGPT for cranking out code so fast!). I’ll report back in a couple of weeks on how effective this is.

What happens if you press one of them?

I would be careful about overriding the default ping of 30 seconds. IIRC, pinging the hub too regularly can cause problems of its own and cause disconnects by overloading the hub (I’m fairly sure this was covered in this thread a few years ago; you would need to search).

My ping sensor is using the default of 30 seconds and sends 2 pings.

I considered that and reasoned a single ping is a very low load. Its 2-4ms typical round-trip times seem to validate that.

Where I suspect people went wrong in the past is using the defaults of 5 pings and 180s “Consider home interval”, as that hammers the hub repeatedly with 5 closely-spaced workloads and takes 3 minutes to detect a problem.

What’s your rationale for 2 closely-spaced pings every 30s instead of spacing them evenly (i.e. 1 every 15s)? The latter should be gentler on the hub and provide more granular telemetry, for the same total pings. Or put another way, what extra value does the second ping provide?

You have misunderstood: “Consider home interval” applies only to the ping device tracker entity, if you enable it, which you don’t want for a ping to a hub.

Since the Ping sensor was moved to the UI, it defaults to 30 seconds with the only way of changing that being to create your own automation and disable the Polling for updates setting. This was done intentionally by the developers to stop people pinging things too frequently (especially cloud services) e.g. every 10 seconds. If you haven’t created an automation to do that, you are on the default of 30 seconds. Putting 10 in the “consider home interval” does not make it run every 10 seconds, nor does leaving it at 180 make it take 3 minutes to detect a problem. It’s for the device tracker entity only, to stop the state flapping, which is disabled by default.

Please see the Ping documentation here.

I use two pings for all my ping sensors. The second one is for redundancy to make sure the first one wasn’t missed or reported incorrectly.

You’re right. “Consider home interval” is irrelevant for determining hub health. Thanks for correcting me.

Let me clarify my understanding of the other two ping choices, as I wouldn’t want others reading this thread to conclude that a 10s ping is risky for a hub.

Background: A single ping is a near-effortless workload, even for a Wiser hub. It’s 32 bytes of low-compute data. That’s roughly 100-300x smaller than the 3-10 KB polling response that the hub sends to HA every 30s for a typical home, not to mention the CPU load of crafting the poll response. If a hub’s CPU is severely loaded, a ping request could topple it, but it’s an edge-case risk.

In HA, there are 2 Ping settings to consider for detecting Wiser hub health:

- Ping interval: Default is 30s, with a minimum of 10s (for the reasons @robertwigley explains above). Shorter intervals give fresher hub state telemetry, and the 10s minimum is unlikely to affect the hub’s performance.

- Ping count is how many 1s-spaced pings are sent in each “interval”. Default is 5 pings. Ping count will have more impact on a hub than ping interval, because the 1s spacing gives the CPU less time to recover. Although risk is low given the tiny footprint of a ping.

A more compelling reason to choose a small ping count is responsiveness: if a hub falls offline, a ping sensor takes 4s to detect this condition with the default ping count of 5 pings, whereas a ping count of 1 or 2 reduces the detection delay to 0s or 1s respectively.
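
The arithmetic behind those figures is simply "one second of spacing between consecutive pings". A minimal Python sketch, assuming the sensor only reports once the whole batch has been sent and ignoring any per-ping timeout (both are simplifications, and `detection_delay_seconds` is a hypothetical name):

```python
def detection_delay_seconds(ping_count: int, spacing_seconds: float = 1.0) -> float:
    """Extra delay added by sending a batch of evenly spaced pings."""
    if ping_count < 1:
        raise ValueError("ping_count must be at least 1")
    # n pings have n - 1 gaps between them.
    return (ping_count - 1) * spacing_seconds


if __name__ == "__main__":
    for count in (1, 2, 5):
        print(count, detection_delay_seconds(count))
```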

Does that sound right, or have I missed something?

On a related note, the stability of my Wiser automations has been transformed by the hub health check scripts I shared above. Here’s an example of the health sensor detecting when the hub slowed down or fell offline yesterday (“Wiser API” bar above the graph):

Thanks again @robertwigley for inspiring me to go down this path.

Is it possible to boost/override a room temperature using the REST API, the same as you can from the Wiser app or HA?

I am writing a small python app, which presents HA features on a touch screen. Adding a tab for my Wiser rooms, I can read if the TRV is currently requesting heat, what the current temperature is and what the target temperature is.

I have added a + and - button for each room in an attempt to allow a short term override until the next point in the schedule, but it does not work.

There seem to be two entities available.

    "climate.wiser_living_room": {
        "entity_id": "climate.wiser_living_room",
        "state": "auto",
        ...output cut...
        "attributes": {
            "current_temperature": 19.7,
            "temperature": 15.0,

and

    "sensor.wiser_lts_target_temperature_living_room": {
        "entity_id": "sensor.wiser_lts_target_temperature_living_room",
        "state": "15.0",

I have tried making a POST request to the REST API at /api/states/<entity_id>, setting the temperature in the attributes for the climate device or the state (converting to string) for the sensor device. Both appear to be successful, returning a 200, but it does not do anything. When the app next polls HA for the Wiser data, it comes back with the previous value. It looks like these two devices may be read only as far as the API is concerned.

Is overriding a temperature a feature? There is a button.wiser_boost_all_heating which I can call for all radiators, but none for individual ones.

You need to use the service call endpoint of the REST API to control things like this.
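
As a rough illustration of the difference: posting to /api/states only changes Home Assistant's stored representation of an entity, whereas posting to /api/services/<domain>/<service> asks the integration to act. The sketch below builds (but does not send) such a request using only the standard library. The base URL and token are placeholders, `build_service_request` is a hypothetical helper, and `climate.set_temperature` is used because it appears earlier in this thread.

```python
import json
import urllib.request
from typing import Any, Dict


def build_service_request(
    base_url: str, token: str, domain: str, service: str, service_data: Dict[str, Any]
) -> urllib.request.Request:
    """Build a POST request for Home Assistant's service call endpoint."""
    url = f"{base_url.rstrip('/')}/api/services/{domain}/{service}"
    body = json.dumps(service_data).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",  # long-lived access token
            "Content-Type": "application/json",
        },
    )


# Placeholder URL and token; nothing is sent until urlopen() is called.
example_request = build_service_request(
    "http://homeassistant.local:8123",
    "YOUR_LONG_LIVED_TOKEN",
    "climate",
    "set_temperature",
    {"entity_id": "climate.wiser_living_room", "temperature": 18},
)
# To actually send it: urllib.request.urlopen(example_request)
```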

Thanks, that does look to be the right area. The documentation suggests sending data like this will work:

    boostData = {
        "data": {
            "time_period": 60,
            "temperature_delta": 2
        },
        "target": {
            "entity_id": "climate.wiser_study"
        }
    }

Is that the right format it is expecting? It doesn’t match the data I get if I call GET on /api/services.

Python is throwing some errors at that JSON, but I think that is a Python thing rather than an HA thing. It can be awkward with nested JSON and POST requests.
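
One possible cause, offered as an assumption rather than a confirmed diagnosis: the nested `data`/`target` layout is how service calls are written in YAML, while the REST service endpoint, as far as I know, takes a single flat JSON object. A minimal sketch that flattens the structure above and serialises it with `json.dumps` (the `to_rest_body` helper is hypothetical, and the thread does not say which service endpoint the body should be posted to):

```python
import json
from typing import Any, Dict


def to_rest_body(service_call: Dict[str, Any]) -> str:
    """Merge the target and data sections into one flat JSON string."""
    flat: Dict[str, Any] = {}
    flat.update(service_call.get("target", {}))  # e.g. entity_id
    flat.update(service_call.get("data", {}))    # e.g. time_period
    return json.dumps(flat)


boost_data = {
    "data": {"time_period": 60, "temperature_delta": 2},
    "target": {"entity_id": "climate.wiser_study"},
}

if __name__ == "__main__":
    print(to_rest_body(boost_data))
```

Serialising with `json.dumps` also avoids hand-built JSON strings, which are a common source of quoting errors in Python.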